Concept Analytics versus Near Duplicate Indexing and Some Suggested Uses

“It is not unusual for clients and even representatives of vendors to confuse concept similarity with near duplicate detection technology, but they are distinctly different and support different review goals.

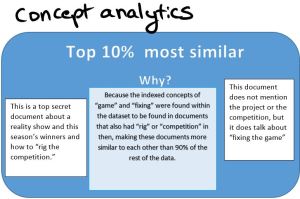

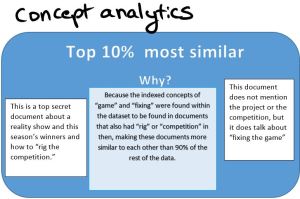

Content Analytics (implemented in kCura’s Relativity using Content Analyst) creates a multi-dimensional web of relationships among words and between words and documents. It calculates a similar score from these relationships using an algorithm that does not care about the order of words, or how they are used in sentences. This is part of the strength of this ‘concept’ index, because it will discover interesting documents that no conventional index would never find. For instance, it is possible to find a highly similar document that has none of the same words in it than the document used as an example for search, so long as enough enough words found in the target document are indexed as closely related to the words in the source document to make that document highly similar relativity to all other documents in the database. Another important distinction between Analytics and Near Duplicate detection is this concept that all similarity is relative to other documents in the universe of the index that was created.

By contrast, Near Duplicates (implemented in kCura’s Relativity using Structured Analytics) are discovered by comparing the text of two documents and analyzing the difference of text between the documents directly. The engine generally determines a “”pivot”” or “”principal”” document against which all similar documents are compared and a percentage similarity calculated. For instance, the final draft of this post might be calculated as the pivot document, and all previous versions might be considered near duplicates. Most near duplicate engines allow the administrator to set a near duplicate threshold, where if a document is more similar than, say, 90 percent, it becomes part of that near duplicate grouping. Groupings are useful, because they allow a reviewer to make a substantive call on a single document and have those choices propagated to all near duplicate documents.

Below are some uses for each kind of similarity engine to demonstrate their suitability for different review workflows:

Concept Indexing

Best for:

- Identifying interesting documents early based upon seed documents

- Identifying documents most relevant to external information, such as a web site, a case summary, or an abstract

- Exploring word relationships to discover terms of art

- Grouping conceptually similar documents for more conceptually similar review batches

- Locating potentially sensitive documents based upon similar subject matter

Not recommended for:

- Privilege filtering — because privilege can be broken by a single email recipient, and attachments can influence the privilege status of other family docuements.

- Any kind of searching or filtering that is dependent upon header information, such as dates and email parties.

- De-duplicating.

Near Duplicate Indexing

Best for:

- Identifying multiple drafts of a key document, such as a contract

- De-duplicating documents that have been collected in a manner that has changed their hash values (such as when emails collected as loose files need to be compared versus properly-collected email), or where de-duplication had been done on a per-custodian basis (using a high similarity threshold)

- Reviewing only identified pivot documents for less relevant documents

Not recommended for:

- Predictive coding

- Finding similar documents that do not share large amounts of text with the example documents.

- Note that email threading may provide an alternative path for de-duplicating email

“